Contributions

- We propose SysNav, a three-level object navigation system that decouples semantic reasoning, navigation planning, and motion control. This design lets each component focus on its own strengths and enables cross-embodiment generalization across wheeled robots, quadrupeds, and humanoids.

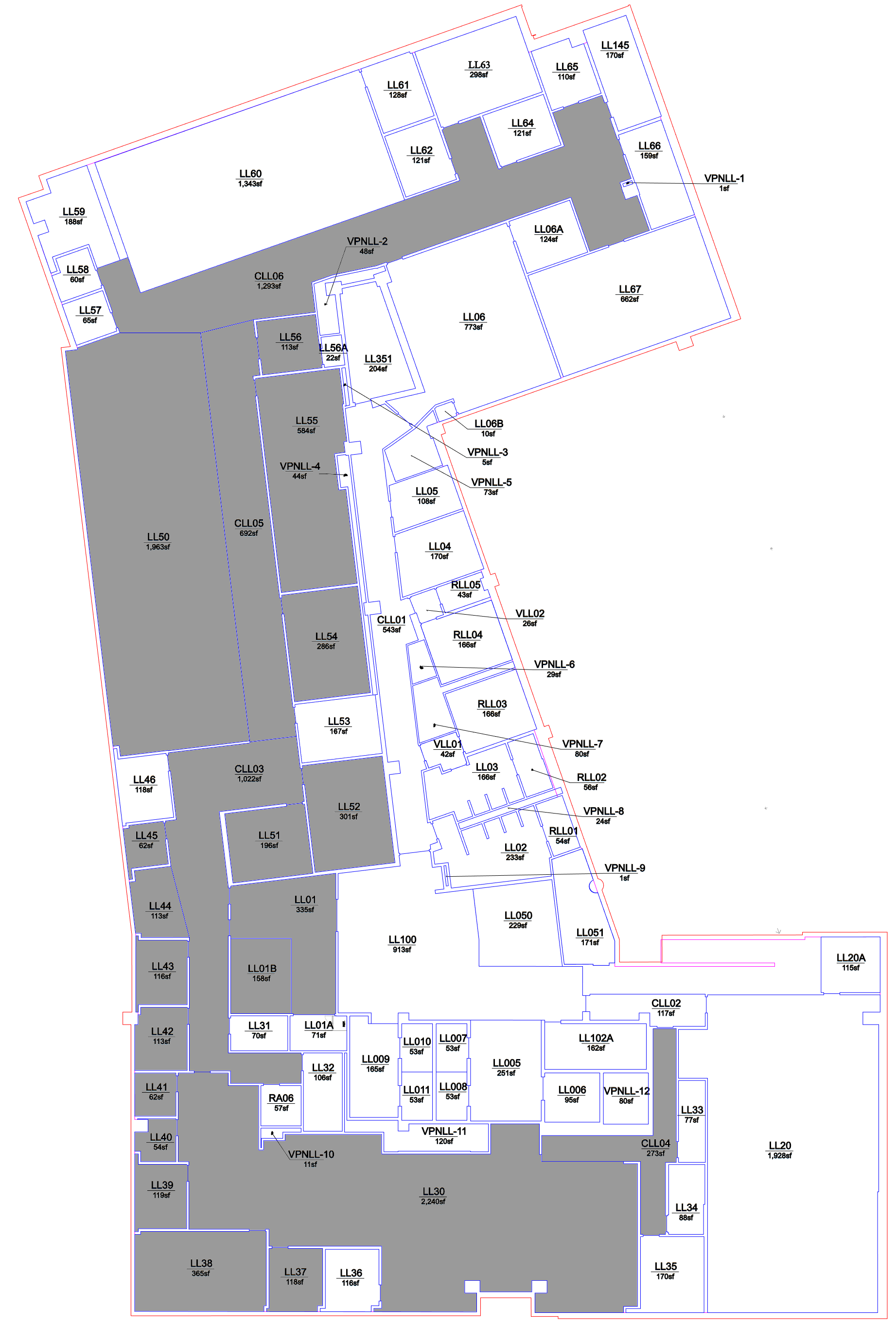

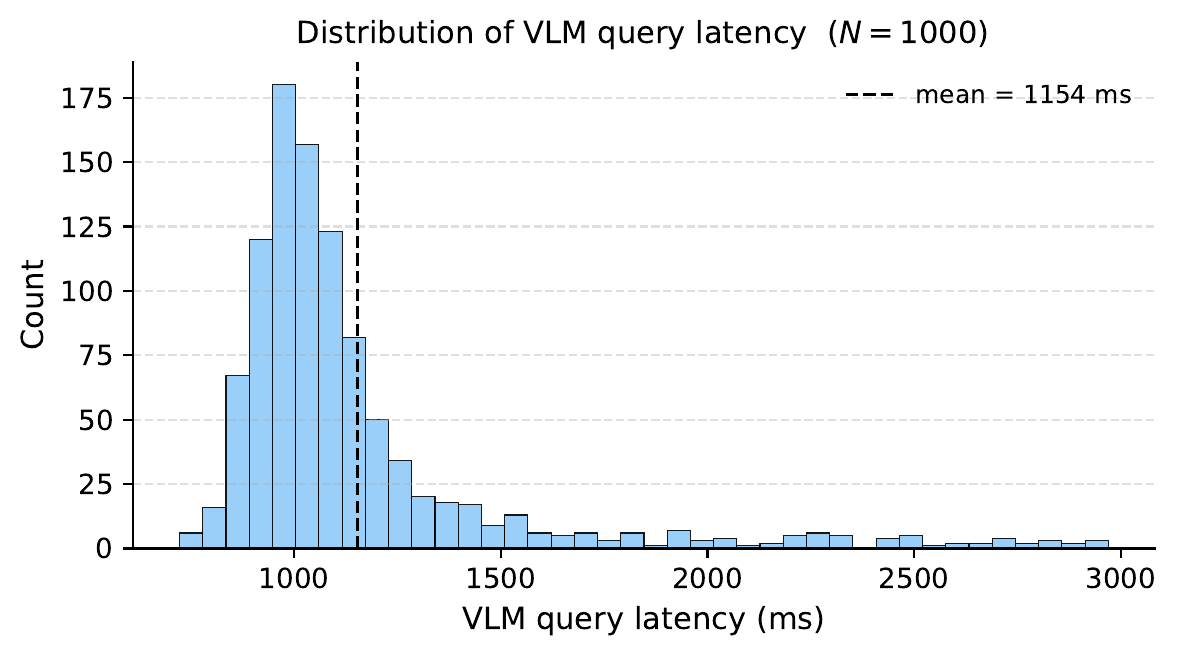

- We design a hierarchical navigation strategy that treats rooms as the minimal decision-making units. A vision-language model (VLM) performs high-level semantic reasoning over a structured scene representation to make room-level decisions, while efficient classical exploration methods handle in-room navigation. This division leverages both the VLM's semantic strengths and the spatial structure of indoor environments.

- Beyond standard object navigation, our system supports conditional object navigation (for example, navigation conditioned on object attributes or spatial relations) through its structured scene representation.

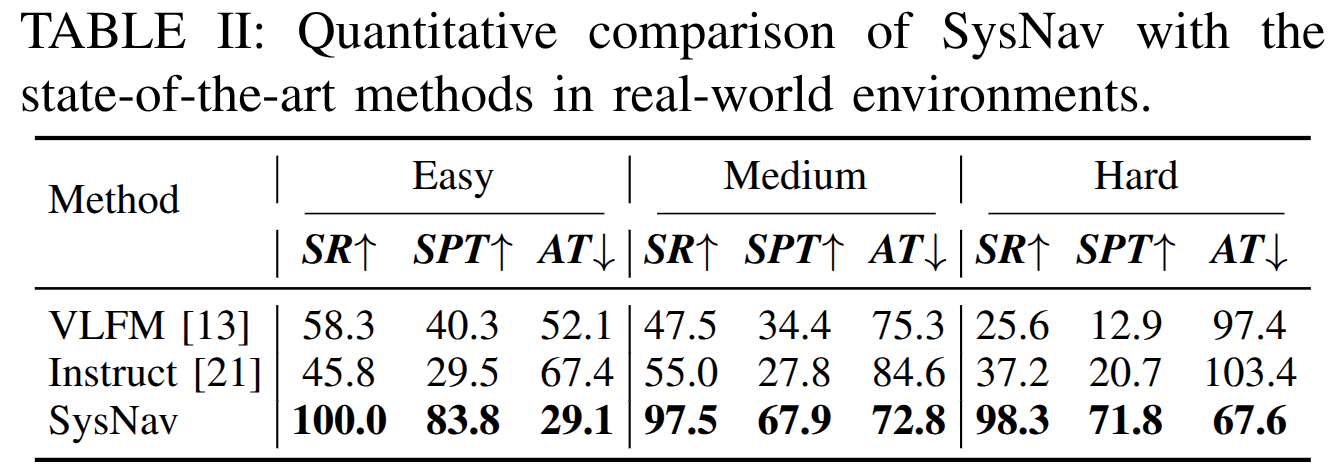

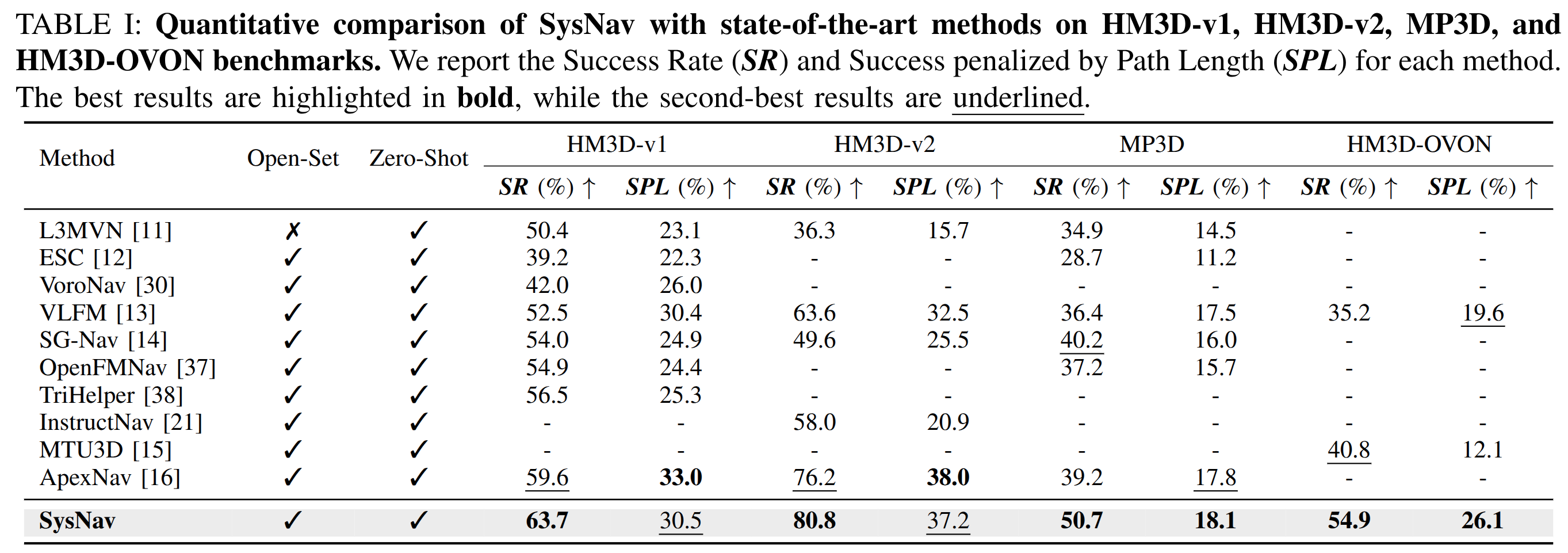

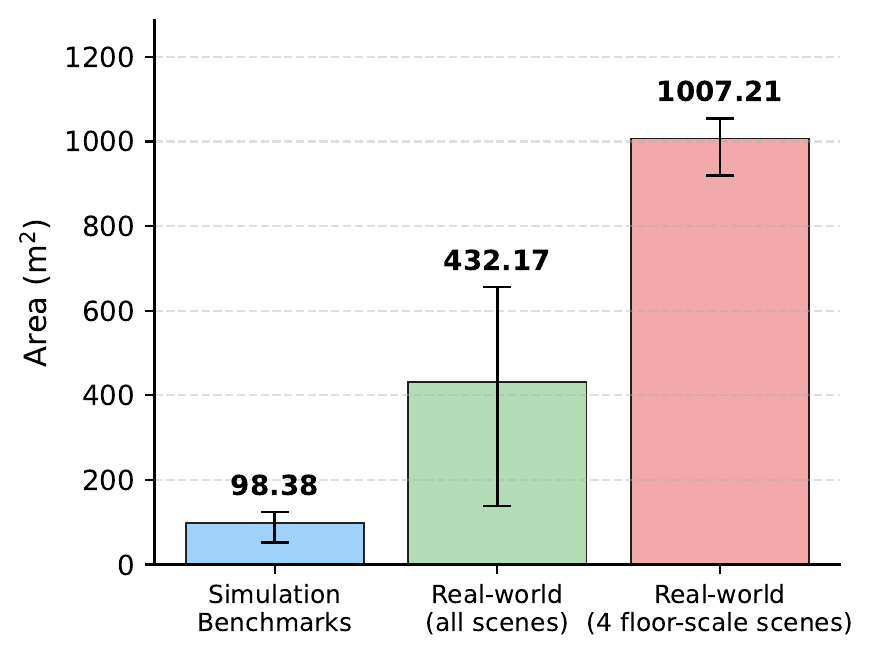

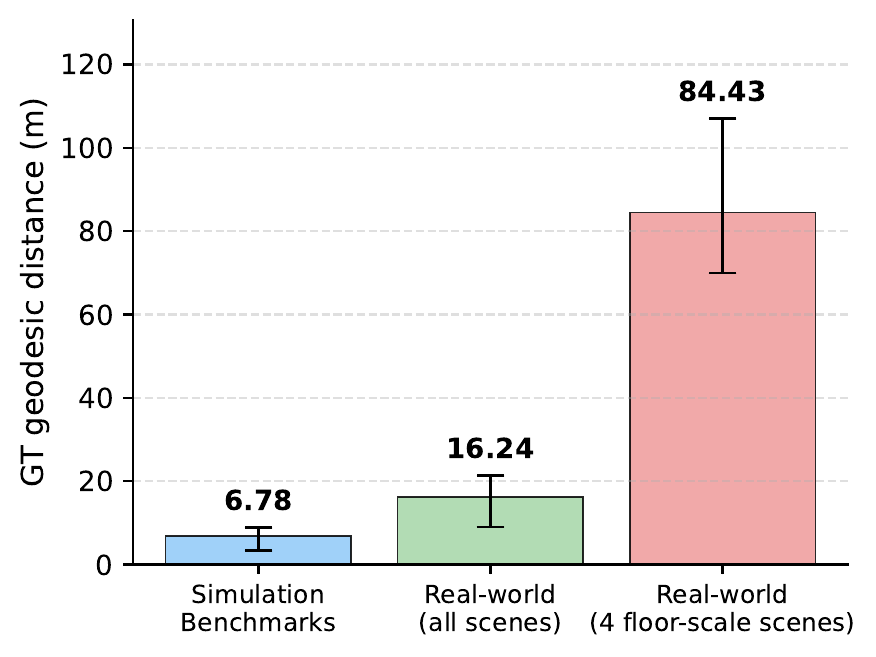

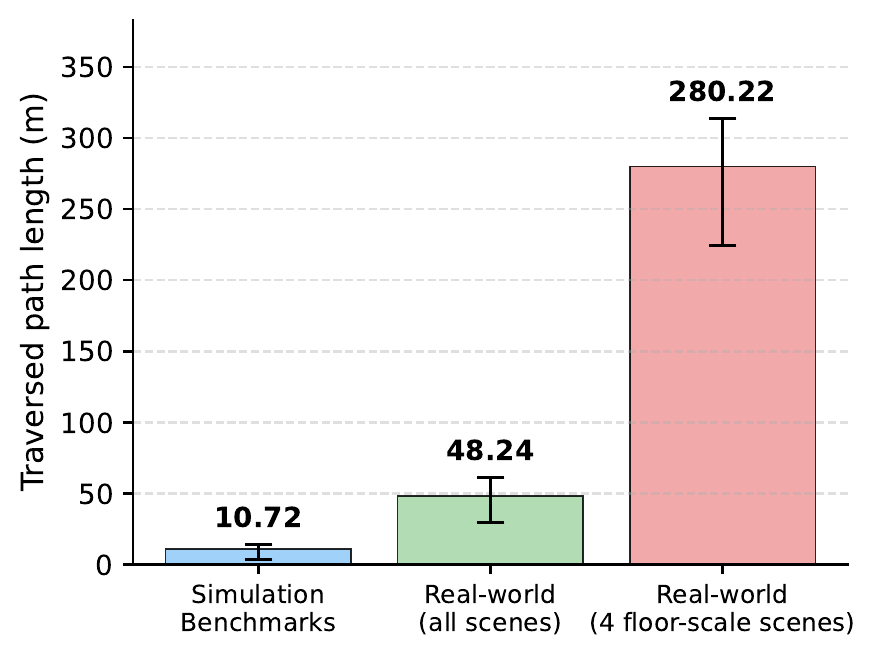

- We conduct extensive evaluations, including 190 real-world experiments across three robot embodiments, in which SysNav achieves a 4-5× improvement in navigation efficiency over existing baselines. We also evaluate on four simulation benchmarks (HM3D-v1, HM3D-v2, MP3D, and HM3D-OVON) and achieve state-of-the-art performance. To the best of our knowledge, this is the first system capable of reliably and efficiently completing object navigation at building scale.
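
To make the room-level strategy and the conditional queries listed above more concrete, the following is a minimal sketch and not the SysNav implementation. All names (`SceneObject`, `Room`, `matches`, `choose_room`, `navigate`) are hypothetical. The `score_room` callback merely stands in for the VLM's room-level reasoning, and `explore_room` stands in for the classical in-room explorer; both are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SceneObject:
    """One entry of a (hypothetical) structured scene representation."""
    label: str
    attributes: set = field(default_factory=set)  # e.g. {"red"}
    near: set = field(default_factory=set)        # labels of nearby objects


@dataclass
class Room:
    name: str
    objects: list = field(default_factory=list)
    explored: bool = False


def matches(obj, label, attribute=None, near=None):
    """Conditional query: label plus optional attribute and spatial relation."""
    if obj.label != label:
        return False
    if attribute is not None and attribute not in obj.attributes:
        return False
    if near is not None and near not in obj.near:
        return False
    return True


def choose_room(rooms, score_room):
    """Room-level decision: best-scoring unexplored room, or None if none left.

    score_room is a placeholder for the VLM's semantic reasoning.
    """
    candidates = [room for room in rooms if not room.explored]
    if not candidates:
        return None
    return max(candidates, key=score_room)


def navigate(rooms, score_room, explore_room, label, attribute=None, near=None):
    """Alternate room selection and in-room exploration until the target is found.

    Returns (matching object or None, list of visited room names).
    """
    visited = []
    while True:
        room = choose_room(rooms, score_room)
        if room is None:
            return None, visited
        explore_room(room)  # placeholder for classical exploration; fills room.objects
        room.explored = True
        visited.append(room.name)
        for obj in room.objects:
            if matches(obj, label, attribute, near):
                return obj, visited


if __name__ == "__main__":
    # Toy ground truth that exploration "discovers"; purely illustrative data.
    hidden = {
        "kitchen": [SceneObject("chair", {"wooden"}, {"table"})],
        "office": [SceneObject("chair", {"red"}, {"desk"})],
        "hallway": [],
    }
    prior = {"kitchen": 0.2, "office": 0.7, "hallway": 0.1}
    rooms = [Room(name) for name in hidden]

    def explore(room):
        room.objects = list(hidden[room.name])

    obj, visited = navigate(rooms, lambda r: prior[r.name], explore,
                            "chair", attribute="red")
    print(visited, obj.label if obj else None)
```

In this toy setup, the decision loop only ever chooses among rooms, and the same `matches` predicate serves plain queries (label only) and conditional ones (label plus attribute or spatial relation), which is the role the structured scene representation plays in the contributions above.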

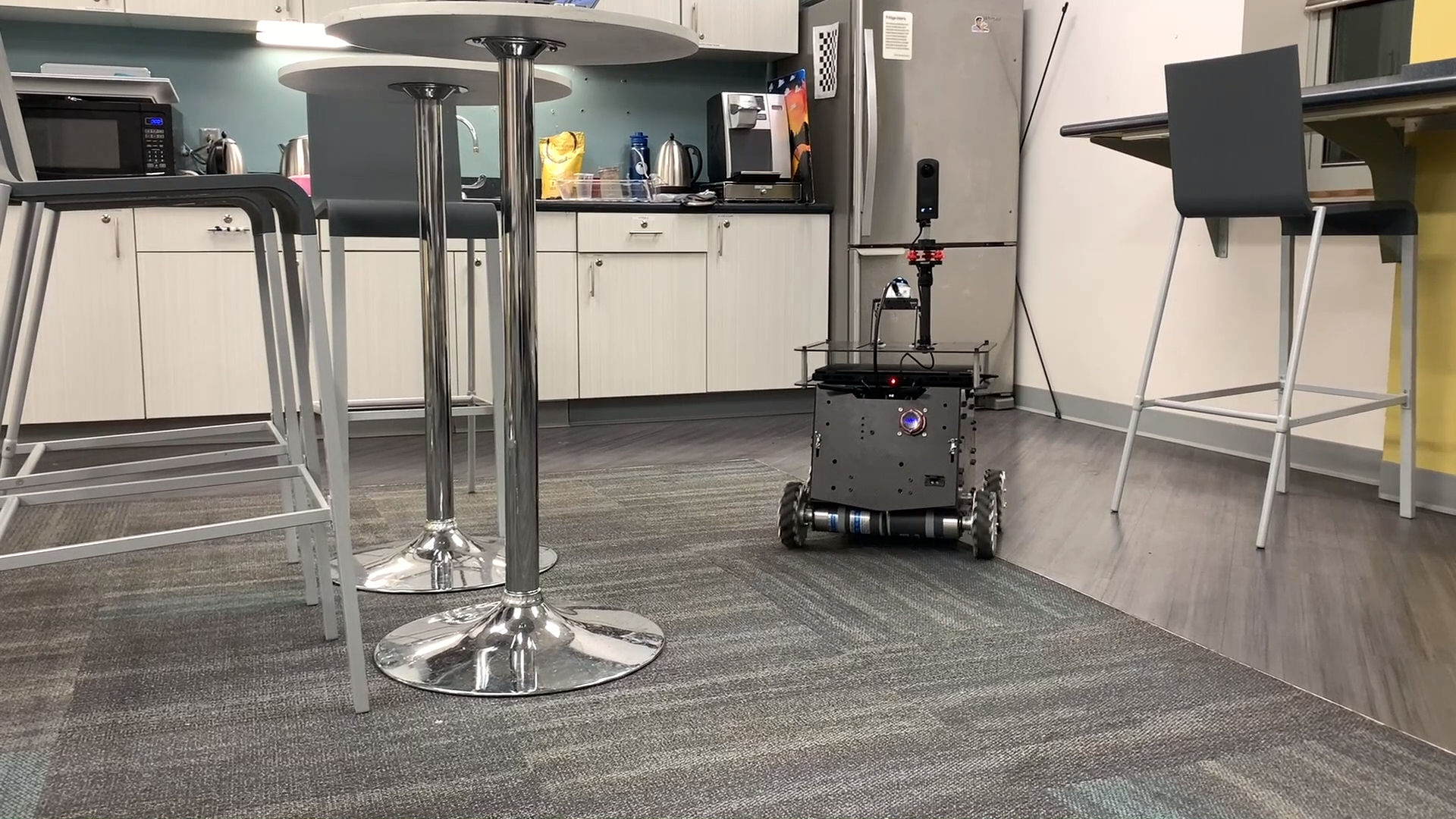

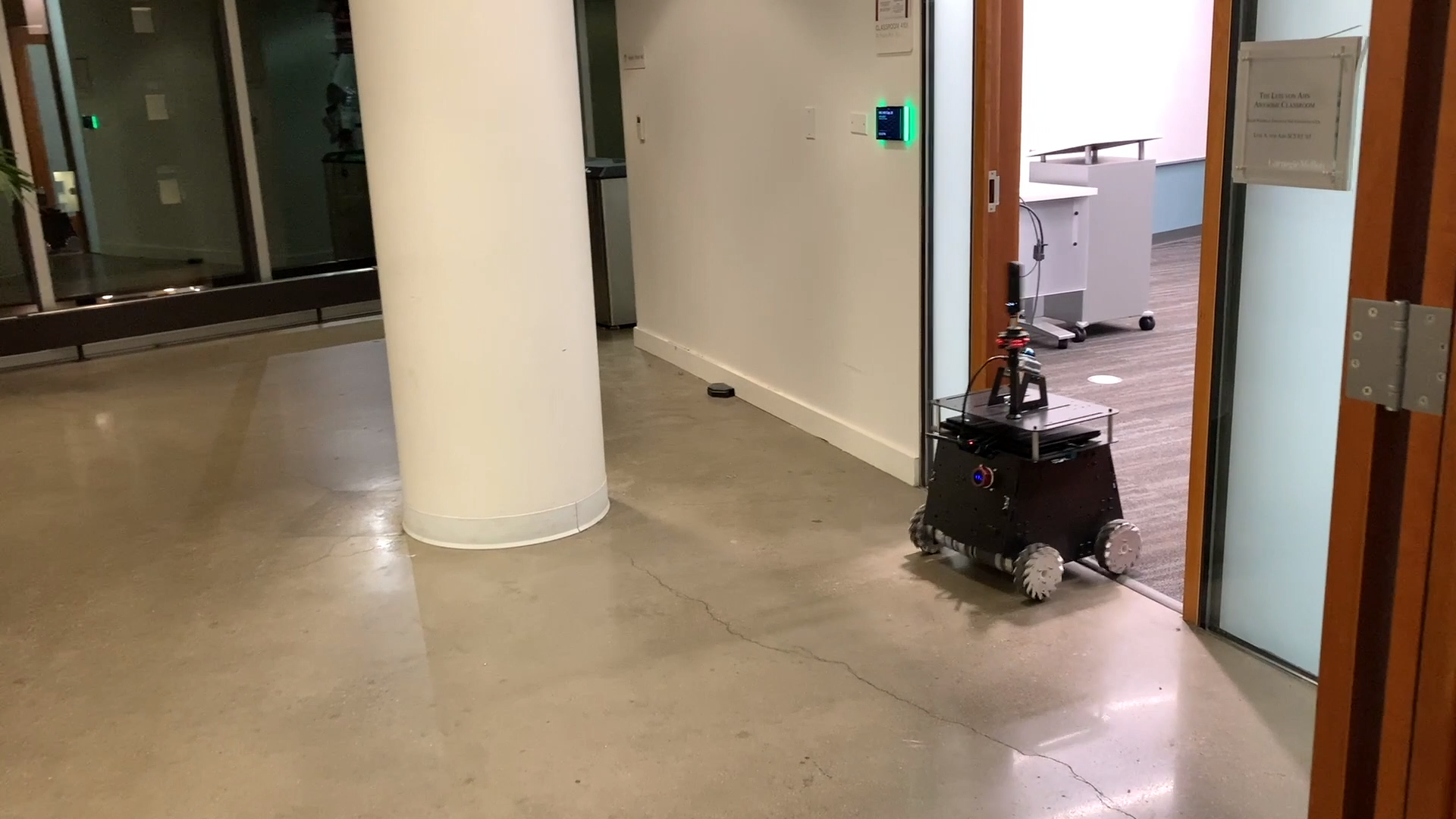

Panel labels: Wheeled Robot, Quadruped, Humanoid.